Jevons Paradox Comes For Marketing

Why falling AI token prices will make your marketing token bill bigger, and what to do about it

In 1865, the economist William Stanley Jevons observed something counterintuitive about the British coal industry. Steam engines were becoming dramatically more efficient. Every new generation used less coal per unit of work. The common-sense conclusion was that Britain would therefore burn less coal.

It burned far more. Cheaper, more efficient engines made coal economically viable for applications no one had previously considered. Consumption outran efficiency. Britain's coal bill went up, not down.

The same thing is happening right now to AI tokens, and most marketing leaders haven't priced it into their 2026 plans.

Three Forces

The numbers tell a simple, uncomfortable story.

Unit prices are falling fast. Frontier model pricing has dropped 60–67% in the last twelve months. Claude Opus went from $15/$75 per million input/output tokens to $5/$25. OpenAI's flagship dropped by a similar proportion. Gartner forecasts another 90%+ decline by 2030. Any procurement leader looking at these curves would conclude that AI is getting cheap.

Unit volumes are rising faster. OpenAI's 2025 State of Enterprise AI report found that API token volume grew 8x year-over-year, and reasoning token consumption per organization grew 320x. Gartner estimates that agentic workflows, the direction every vendor is pushing, consume 5x to 30x more tokens per task than a standard chatbot interaction. Deloitte's 2025 survey of 3,235 enterprise leaders found that only 7% of organizations report AI "fully scaled." If Deloitte's signal is accurate, the other 93% of organizations still have most of their consumption growth ahead of them.

The billing model that captures value is up for grabs. WPP publicly told investors it is moving away from hours-based pricing. McKinsey disclosed in late 2025 that roughly 25% of its global fees now come from outcome-based pricing. HubSpot shifted its Breeze Customer Agent from $1.00 per conversation to $0.50 per resolved conversation. No one has figured out the right answer yet.

Stack these together and the implication is clear: falling per-token prices will be swamped by rising consumption, and the revenue model that used to work, billing time, is breaking as AI efficiency increases. The winners in this environment aren't the ones with the cheapest model access.

They're the ones who treat tokens as a managed P&L line and price their work around outcomes.
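The arithmetic behind "falling prices, bigger bill" is short enough to check by hand. The sketch below uses two figures quoted above (a 67% price drop and 8x volume growth) and assumes, purely for illustration, that both apply to the same workload over the same year. The function names are made up for this example.

```python
def bill_multiplier(price_drop: float, volume_growth: float) -> float:
    """Return next year's token bill as a multiple of this year's.

    price_drop: fractional fall in unit price (0.67 means 67% cheaper).
    volume_growth: multiple by which token volume grows (8.0 means 8x).
    """
    return (1.0 - price_drop) * volume_growth


def break_even_growth(price_drop: float) -> float:
    """Volume multiple at which the bill stays flat despite the price drop."""
    return 1.0 / (1.0 - price_drop)


# Figures quoted above: prices down 67%, API token volume up 8x.
multiplier = bill_multiplier(price_drop=0.67, volume_growth=8.0)
print(f"Bill changes by {multiplier:.2f}x")
print(f"Break-even volume growth: {break_even_growth(0.67):.2f}x")
```

Under these assumptions the bill grows about 2.6x, and any volume growth above roughly 3x outpaces the price cut.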

Here's how that plays out for three different kinds of marketing leaders.

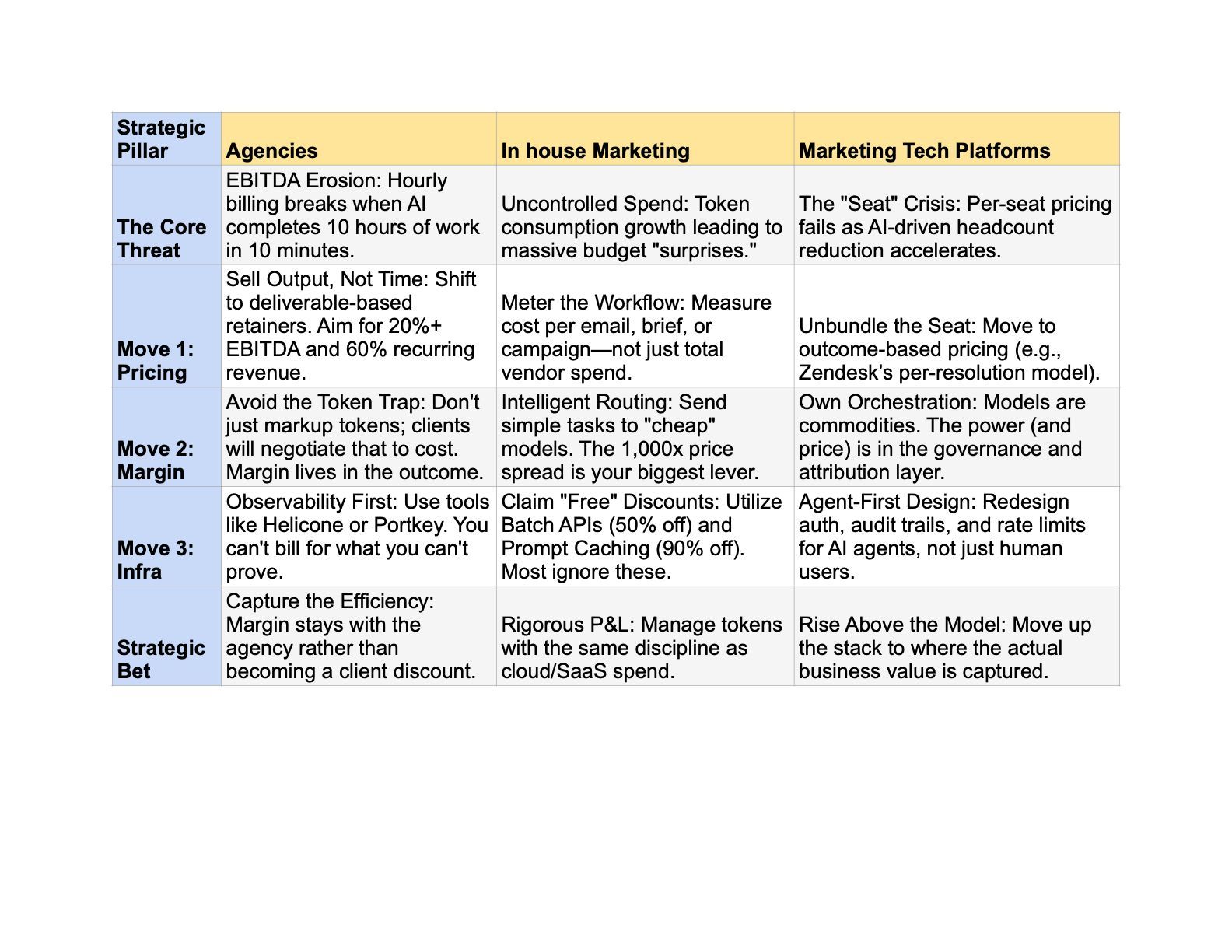

If you run an agency: your EBITDA is under active attack

The math of hourly billing breaks the moment AI shaves meaningful time off delivery. If your team can now produce a campaign in two days instead of two weeks, and you bill by the hour, your revenue just fell 80% for identical client value. SPI Research's 2025 benchmark — covering 403 professional services firms representing nearly $60 billion in revenue — found industry EBITDA fell to 9.8%, a five-year low. Utilization dropped to 68.9%, below the 75% threshold needed for healthy margins.

This is not a cyclical dip. It is the first visible effect of AI efficiency being absorbed as a revenue hit rather than a margin expansion, by agencies that haven't changed how they charge.
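The 80% figure in the hourly-billing example follows directly from the billing formula. Here is a rough sketch, assuming ten working days in two weeks, an eight-hour day, and a hypothetical $200 hourly rate. None of these inputs come from the benchmark data.

```python
HOURS_PER_DAY = 8      # assumption
HOURLY_RATE = 200.0    # hypothetical rate, USD


def hourly_revenue(days: float, rate: float = HOURLY_RATE) -> float:
    """Revenue when the client is billed for time spent."""
    return days * HOURS_PER_DAY * rate


def revenue_change(before: float, after: float) -> float:
    """Fractional change in revenue (negative means a drop)."""
    return (after - before) / before


before = hourly_revenue(days=10)  # two working weeks
after = hourly_revenue(days=2)    # same campaign, AI-assisted

# Deliverable pricing: the fee stays at the old total, whatever the effort.
deliverable_fee = before

print(f"Hourly billing: {revenue_change(before, after):+.0%}")
print(f"Deliverable pricing: {revenue_change(before, deliverable_fee):+.0%}")
```

The rate cancels out of the percentage, so the 80% drop holds for any hourly rate. Only the ratio of days matters.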

Three moves protect agency EBITDA in 2026:

- Shift retainers from "hours committed" to "deliverables committed." The agencies hitting 20%+ EBITDA in the current benchmark data share a common trait: recurring revenue above 60% of total, priced to deliverables rather than staffing levels. When you deliver faster, the margin flows to you, not back to the client as a discount.

- Stop pretending token costs are a billable line item. The temptation to pass through AI costs with a markup (the "principal media" model applied to tokens) is a trap. Clients will negotiate token pass-throughs to cost recovery within a year. Margin has to come from outcomes, not infrastructure markup.

- Invest in the observability layer before you need it. You cannot credibly bill a client for AI-enabled work if you can't show them what was consumed on their behalf. Platforms like Helicone and Portkey tag every API call with client, project, and campaign metadata. Agencies without this infrastructure are flying blind into client conversations that will get increasingly pointed.
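What "tag every API call" means in practice can be shown without any particular vendor. The sketch below is a hypothetical in-memory ledger, not the Helicone or Portkey API. The class names, field names, and sample clients are all assumptions for illustration, and the prices reuse the $5/$25 per-million figure quoted earlier.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageRecord:
    """One model call, tagged with who it was made for."""
    client: str
    project: str
    campaign: str
    input_tokens: int
    output_tokens: int


class UsageLedger:
    """Hypothetical ledger; prices are USD per million tokens."""

    def __init__(self, input_price: float, output_price: float) -> None:
        self.input_price = input_price
        self.output_price = output_price
        self.records: list[UsageRecord] = []

    def log(self, record: UsageRecord) -> None:
        self.records.append(record)

    def cost(self, record: UsageRecord) -> float:
        """Cost of a single call in USD."""
        return (record.input_tokens * self.input_price
                + record.output_tokens * self.output_price) / 1_000_000

    def cost_by(self, field: str) -> dict[str, float]:
        """Total cost grouped by a tag such as 'client' or 'campaign'."""
        totals: dict[str, float] = defaultdict(float)
        for record in self.records:
            totals[getattr(record, field)] += self.cost(record)
        return dict(totals)


ledger = UsageLedger(input_price=5.0, output_price=25.0)
ledger.log(UsageRecord("acme", "q1-launch", "email", 200_000, 40_000))
ledger.log(UsageRecord("acme", "q1-launch", "social", 100_000, 20_000))
ledger.log(UsageRecord("globex", "rebrand", "email", 400_000, 80_000))
print(ledger.cost_by("client"))
```

The point of the design is that attribution happens at write time: if the tags are not attached when the call is made, no later report can reconstruct which client the tokens were spent on.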

If you run in-house marketing at a brand: start treating tokens like cloud spend

The 2020 playbook for cloud costs now applies to AI tokens. The finance, engineering, and marketing teams that went through that transition already know what comes next: a period of uncontrolled growth, a budget surprise, a scramble for observability, and eventually the emergence of an "AI FinOps" function.

You can skip the surprise. The decisions that matter are not technical; your engineering partners handle implementation. The decisions that matter are about what you choose to meter and what you choose to automate.

- Meter by workflow, not by tool. A CMO who knows "we spend $40,000 a month on Claude" has no actionable information. A CMO who knows "personalized email generation costs us $0.014 per email across 2.1 million sends, and our agentic campaign-brief workflow costs $0.36 per brief across 1,800 briefs" has a P&L they can optimize. Demand that level of attribution from your vendors and your internal team.

- Match the model to the task. Most marketing work does not need a flagship model. Classification, tagging, first-draft subject lines, routine personalization, and sentiment analysis run perfectly well on Haiku, Gemini Flash, or Mistral Small at a fraction of the cost of Opus or GPT-5.4. There is roughly a 1,000× price spread between the most expensive OpenAI model and the most basic. Routing simple tasks to cheap models and reserving flagship intelligence for complex reasoning is a bigger cost lever than every caching and batching trick combined.

- Claim the free discounts. The Anthropic Batch API offers 50% off for any workload that tolerates a 24-hour turnaround. Prompt caching on Claude cuts cached input costs by 90%. Stacked together, they yield roughly a 95% discount on cached input tokens for workloads like overnight sentiment analysis, bulk content generation, and embedding refreshes. Most marketing teams aren't using either. This is money sitting on the floor.
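The three levers above reduce to a few lines of arithmetic. The sketch below uses only figures quoted in this section, plus two labeled assumptions: that both workflows fall under the same $40,000 monthly bill, and that the batch and caching discounts multiply on the same cached input tokens. The routing table is hypothetical, and its tier names are placeholders rather than real model identifiers.

```python
MONTHLY_SPEND = 40_000.00  # "$40,000 a month on Claude"

# workflow -> (unit cost in USD, units per month), as quoted above
WORKFLOWS = {
    "personalized_email": (0.014, 2_100_000),
    "campaign_brief": (0.36, 1_800),
}

# Hypothetical routing table: task type -> model tier (placeholder names).
ROUTES = {
    "classification": "small",
    "tagging": "small",
    "subject_line_draft": "small",
    "sentiment_analysis": "small",
    "complex_reasoning": "flagship",
}


def workflow_costs(workflows: dict) -> dict:
    """Monthly cost per workflow: unit cost times volume."""
    return {name: round(unit * count, 2)
            for name, (unit, count) in workflows.items()}


def route(task_type: str) -> str:
    """Pick a model tier; unknown task types default to the flagship."""
    return ROUTES.get(task_type, "flagship")


def stacked_discount(batch: float = 0.50, cache: float = 0.90) -> float:
    """Combined discount when both apply to the same cached input tokens."""
    return 1.0 - (1.0 - batch) * (1.0 - cache)


costs = workflow_costs(WORKFLOWS)
attributed = sum(costs.values())
unattributed = MONTHLY_SPEND - attributed

print(costs)
print(f"Attributed: ${attributed:,.0f}  Unattributed: ${unattributed:,.0f}")
print(f"Stacked discount on cached input: {stacked_discount():.0%}")
print(route("tagging"), route("complex_reasoning"))
```

Under these assumptions the two workflows account for $30,048 of the $40,000, leaving about $9,952 with no owner, which is exactly the kind of gap workflow-level metering is meant to expose. Defaulting unknown tasks to the flagship tier trades cost for safety; a team that is confident in its task taxonomy might default the other way.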

If you run a marketing tech platform: per-seat pricing is your vulnerability

In February 2026, Anthropic launched Claude Cowork. In a single trading day, the market wiped $285 billion off enterprise software valuations. By mid-month the cumulative destruction reached roughly $1 trillion. Salesforce, Workday, ServiceNow, Atlassian, Adobe, and HubSpot all dropped in unison.

Goldman Sachs equity research later argued the selloff was overdone, but the underlying investor thesis is worth taking seriously: AI reduces the headcount that uses marketing software, and per-seat pricing is directly exposed to that reduction. The platforms that are adapting are doing three things at once.

- They are unbundling their pricing. Salesforce now offers three parallel models: seats, Flex Credits at $0.10 per action, and per-user agent licenses. HubSpot shifted its AI Customer Agent to per-resolution pricing. Zendesk launched Automated Resolutions at $1.50 each. The companies winning the AI transition aren't abandoning seats: they're offering outcome-based pricing alongside seats and letting customers self-select.

- They are owning the orchestration layer, not just the model access. The model vendors (OpenAI, Anthropic, Google) are commoditizing faster than anyone predicted. What isn't commoditizing is the gateway, the observability, the attribution, and the governance that sit above the models. Platforms that own this layer capture pricing power regardless of which underlying model wins.

- They are building for agents as first-class users. Your next power users aren't humans clicking through a UI; they're AI agents calling your API on behalf of humans. The platforms that recognize this early are redesigning rate limits, authentication, audit trails, and pricing tiers around agentic access patterns.

The three moves form a coherent sequence: unbundling pricing buys time, owning orchestration provides defensibility, and building for agents is where the market is actually heading.
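To see why per-seat revenue is exposed, compare it with outcome-based revenue as a customer's headcount shrinks. Every input below is hypothetical except the $0.50 per resolved conversation price quoted earlier in this article. The seat price, seat counts, and resolution volume are invented to illustrate the mechanism, not to predict anything.

```python
SEAT_PRICE = 100.0           # hypothetical USD per seat per month
PRICE_PER_RESOLUTION = 0.50  # per resolved conversation, as quoted earlier


def seat_revenue(seats: int) -> float:
    """Monthly revenue from seat licenses alone."""
    return seats * SEAT_PRICE


def outcome_revenue(resolutions: int) -> float:
    """Monthly revenue from outcome-based pricing alone."""
    return resolutions * PRICE_PER_RESOLUTION


def hybrid_revenue(seats: int, resolutions: int) -> float:
    """Seats and outcome pricing offered side by side."""
    return seat_revenue(seats) + outcome_revenue(resolutions)


# Hypothetical customer: headcount falls from 50 to 30 seats while
# AI agents take on 4,000 resolved conversations a month.
before = hybrid_revenue(seats=50, resolutions=0)
seats_only_after = seat_revenue(30)
hybrid_after = hybrid_revenue(seats=30, resolutions=4_000)

print(f"Before: ${before:,.0f}")
print(f"After, seats only: ${seats_only_after:,.0f}")
print(f"After, seats plus outcomes: ${hybrid_after:,.0f}")
```

In this invented scenario a seats-only vendor loses 40% of the account's revenue, while the hybrid vendor holds revenue flat because the work the departed users did is now billed per outcome.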

The common thread

For all three audiences, the instruction is the same: stop treating AI as a technology investment and start treating it as a unit-economics problem.

The agencies, brands, and platforms that come out ahead in 2026 and 2027 will not be the ones with the best prompts or the most impressive demos. They will be the ones who did the boring work of instrumenting their token consumption, routing traffic intelligently, batching what can wait, caching what repeats, and, most importantly, pricing their output in units that survive a 90% efficiency gain.

Jevons figured this out with coal in 1865. The lesson hasn't changed. Cheaper inputs expand markets, and expanded markets consume more, not less. The marketing leaders who plan for rising bills despite falling prices will end 2027 with expanded margin. The ones who assume the falling prices will save them will end 2027 explaining to their boards what happened.

About the author

Jon Louis is a marketing leader who has built brands and top-performing teams across technology, healthcare, and professional services organizations.